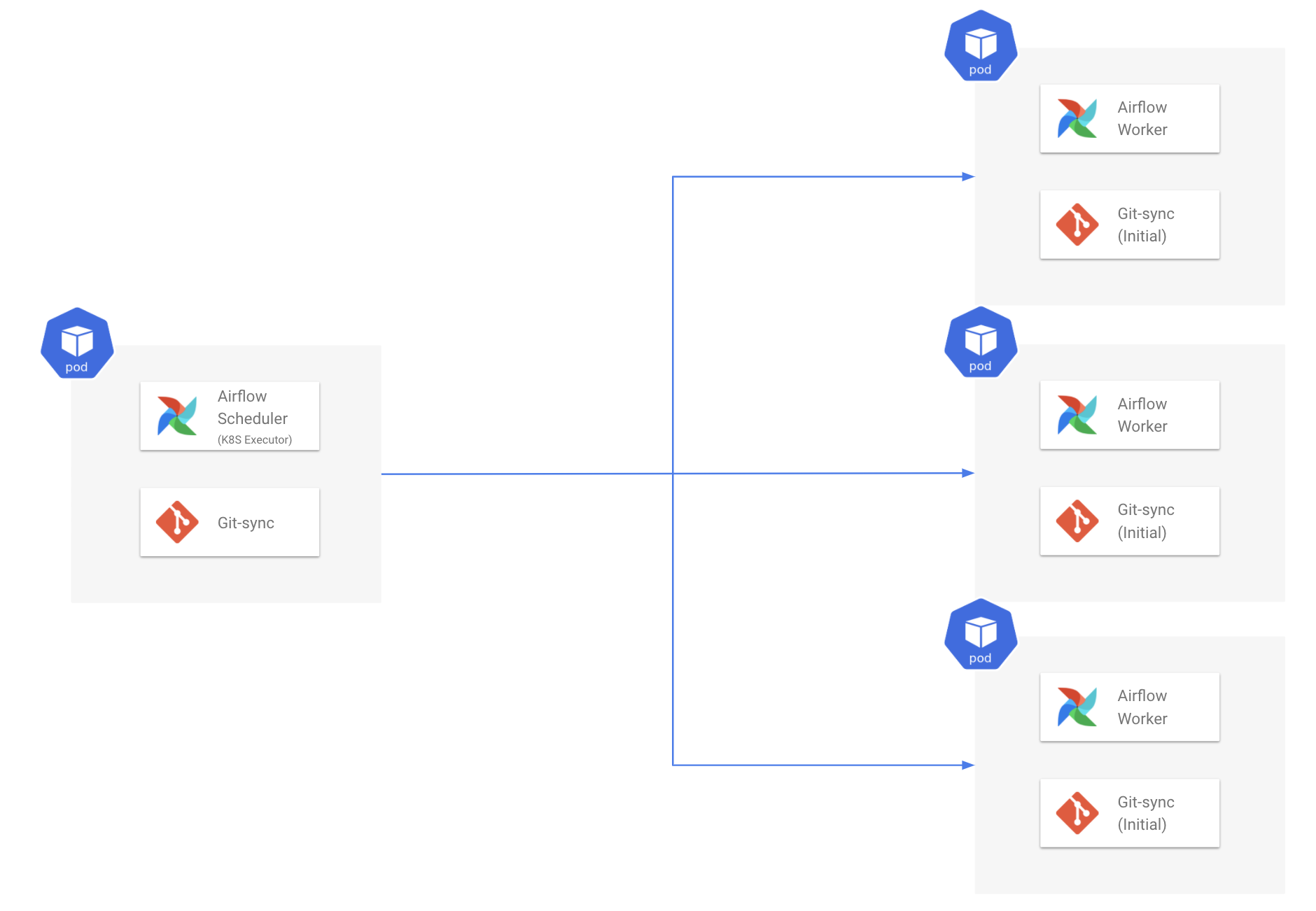

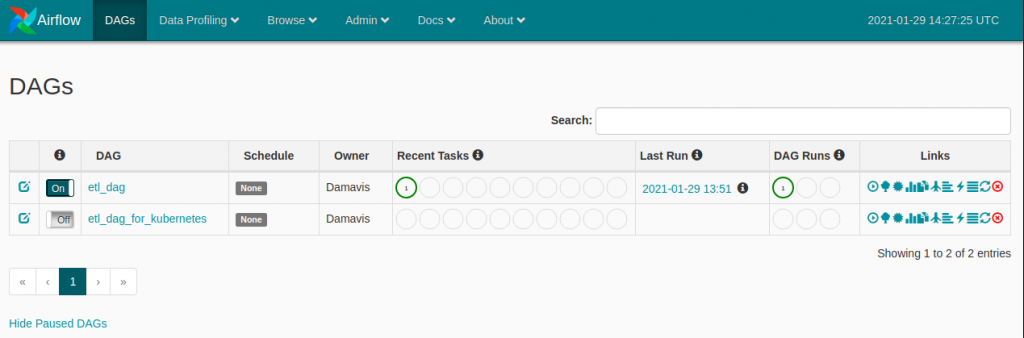

The KubernetesPodOperator uses the Kubernetes API to launch a pod in a Kubernetes cluster. By supplying an image URL and a command with optional arguments, the operator uses the Kube Python Client to generate a Kubernetes API request that dynamically launches those individual pods. On Google Composer, KubernetesPodOperator launches Kubernetes pods in your environment's cluster. In comparison, Google Kubernetes Engine operators run Kubernetes pods in a… With the help of Kubernetes, Airflow gains the ability to scale, and the Kubernetes operator is one of the tools designed to push that automation further.

Example DAG using the Kubernetes operator, `hellopythondag.py`. It is built from these imports and calls: `from airflow import DAG`, `from ….operators.kubernetes_pod …`, `from …_operator import PythonOperator`, `from …_operator import BashOperator`, `from kubernetes.client import models as k8s`, `context.xcom_push(key='input', value='Mr.CocaCola')`, and `Pod_logs = context.…`

Question: I am running a Kubernetes pod using the Airflow KubernetesPodOperator, then executing a jar file in the pod and writing the output to `/airflow/xcom/return.json`. When checking the task's XCom value in the Airflow UI, it shows `return_value` as the content of the `return.json` file. But when I try to pull the return value inside the PythonOperator callable function, it returns `None`. Any idea why this is happening and how to retrieve the return value inside the Python callable function? Thanks.

A related question asks whether a task failure was caused by an out-of-memory event. The answer: to find the reason behind failed task instances, check the Airflow web interface > DAG's Graph View. The Kubernetes operator also accepts a retries option, but you should first understand the reason behind the failed tasks.

Other questions on the same topic deal with mounting a directory for pods in Kubernetes: "Mounting volume issue through KubernetesPodOperator in GKE airflow", "Mounting folders with KubernetesPodOperator on Google Composer/Airflow", and "How to mount volume of airflow worker to airflow kubernetes pod operator".

For deployment, this boilerplate provides an Airflow cluster using the Kubernetes Executor hosted in… Assuming your kubectl context points to the correct Kubernetes cluster, first create the Kubernetes secrets that hold the Airflow MySQL password. Poltak Jefferson lists the application versions involved in his article: Apache Airflow 1.10.12, Airflow Helm Chart 7.9.0, Kubernetes 1.18.8. "I learned some best practices from other teams how they…"

On Windows with WSL, going to Docker Desktop -> Settings -> Resources -> WSL Integration and switching to the default Ubuntu distro helped fix my issue. Making sure that my kube config was correct and that I could sign in to the AWS CLI on the WSL machine fixed the rest. Finally, I needed to log in to the image from the Windows terminal and install all the dependencies for k8s.
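The XCom round trip from the question (the pod writes `/airflow/xcom/return.json`, and a Python callable pulls the value afterwards) could look roughly like the sketch below. It is not the asker's code. The function names and the `run_jar` task id are hypothetical, and the sketch assumes the callable receives the Airflow context as keyword arguments, including the task instance under `ti`. One thing worth checking when the pull returns `None` is whether `task_ids` matches the pod task's id exactly.

```python
import json
from pathlib import Path

# Path named in the question; the pod writes its result here for XCom.
XCOM_RETURN_PATH = "/airflow/xcom/return.json"


def write_xcom_result(result, path=XCOM_RETURN_PATH):
    """Hypothetical helper run inside the pod: store `result` as JSON."""
    target = Path(path)
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(json.dumps(result))


def read_pod_result(pod_task_id="run_jar", **context):
    """Hypothetical python_callable for a downstream PythonOperator.

    `run_jar` is an assumed task_id; it must match the pod task's id.
    """
    ti = context["ti"]  # task instance supplied through the Airflow context
    value = ti.xcom_pull(task_ids=pod_task_id, key="return_value")
    if value is None:
        # Fail loudly instead of passing None further down the DAG.
        raise ValueError(f"No XCom 'return_value' found for task {pod_task_id!r}")
    return value
```

On Airflow 1.10.x the PythonOperator only passes the context to the callable when `provide_context=True` is set. Raising on `None` is a design choice here: it makes a mismatched task id show up as a failed task rather than as a silent empty value.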
